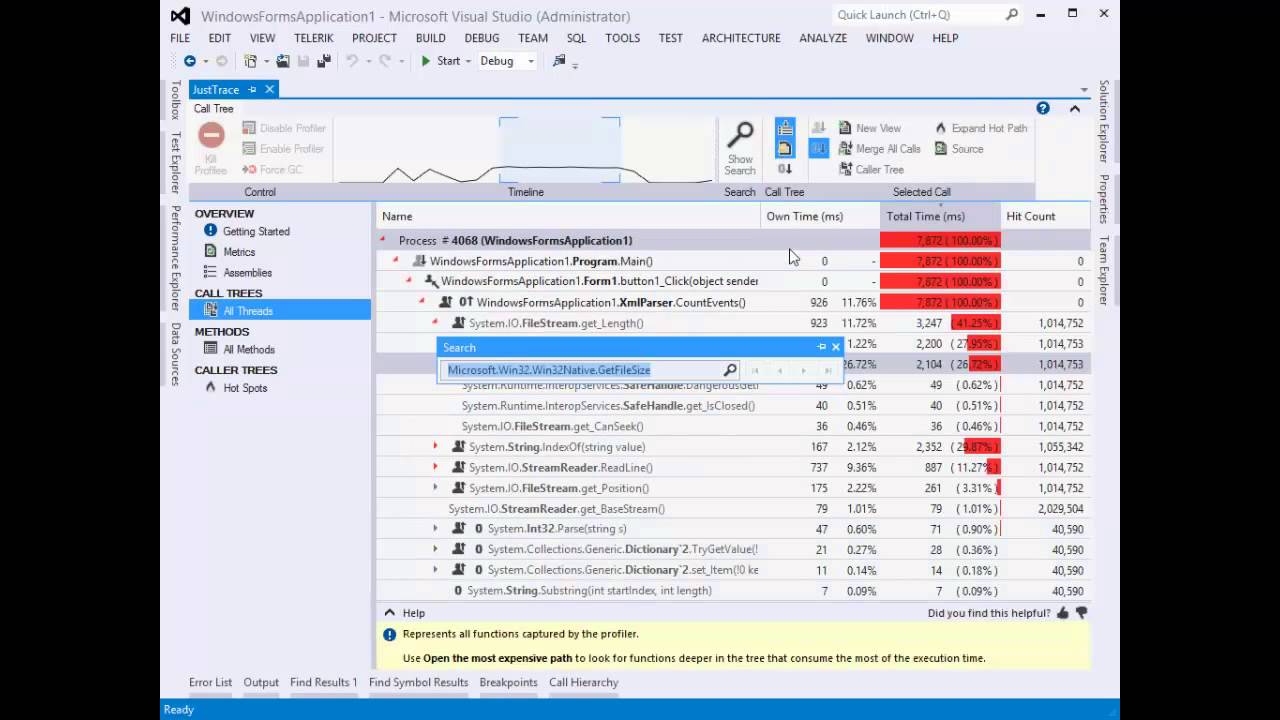

Recently I have been dealing a lot with memory problems such as leaks, stack/heap corruption, heap fragmentation, buffer overflows and the like. Surprisingly, these things happen in the .NET world too, especially when one deals with COM/PInvoke interoperability.

The CLR comes with a garbage collector (GC), which is a great thing. The GC has been around for many years; we take it for granted and rarely think about it. This cuts both ways. On one hand, it is proof that the GC does an excellent job most of the time. On the other hand, the GC can become a big issue when you want to get the maximum possible performance.

I think it would be nice to explain some of the GC details. I hope this series of posts will help you build more GC-friendly apps. Let’s start.

GC

I assume you know what GC is, so I am not going to explain it. There is a lot of great material on the internet on this topic. I am going to state only the single most important thing: GC provides automatic dynamic memory management. As a consequence, GC prevents the problems that were (and still are!) common in native/unmanaged applications:

- dangling pointers

- memory leaks

- double free

Over the years, improper use of dynamic memory allocation became a big problem. Nowadays many modern languages rely on GC. Here is a short list:

|  |  |
| --- | --- |
| ActionScript | Lua |
| AppleScript | Objective-C |
| C# | Perl |
| D | PHP |
| Eiffel | Python |
| F# | Ruby |
| Go | Scala |
| Haskell | Smalltalk |
| Java | VB.NET |
| JavaScript | Visual Basic |

I guess more than 75% of all developers program in these languages. It is also important to say that there are attempts to introduce a basic form of “automatic” memory management in C++ as well. Although the smart pointers auto_ptr (deprecated in C++11 and removed in C++17), shared_ptr and unique_ptr have limitations, they are a step in the right direction.

You have probably heard that GC is slow. I think there are two aspects to that statement:

- for the most common LOB (line-of-business) applications, GC is not slower than manual memory management

- for real-time applications, GC is indeed slower than well-crafted manual memory management

However, most of us are not working on real-time applications. Also, not everyone is capable of writing high-performance code; it is indeed hard to do. There is good news, though. With the advances in GC research, there are signs that GC will become even faster than the current state-of-the-art manual memory management. I am pretty sure that in the future no one will pay for real-time software with manual memory management; it will be too risky.

GC anatomy

Every GC is composed of two components: the mutator and the collector.

The mutator is responsible for memory allocation. It is so called because it mutates the object graph during program execution. For example, in the following pseudo-code:

    string firstname = "Chris";
    Person person = new Person();
    person.Firstname = firstname;

the mutator is responsible for allocating the memory on the heap and for updating the object graph by setting the field Firstname to reference the object firstname (we say that firstname is reachable from person through the field Firstname). It is important to say that these reference fields may be part of objects on the heap (as in our scenario, the person object) but may also be contained in other objects known as roots. The roots may be thread stacks, static variables, GC handles and so on. As a result of the mutator’s work, any object can become unreachable from the roots (we say that such an object becomes garbage). This is where the second component comes in.
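To make reachability a bit more tangible, here is a minimal Python sketch. The class `Obj` and the helper `reachable` are names I made up for illustration, and I assume that `person` is the only root (for example, because the local variable `firstname` has already gone out of scope). It is not how any real collector is implemented.

```python
class Obj:
    """A toy heap object that holds only reference fields (hypothetical)."""
    def __init__(self, name, field_count=1):
        self.name = name
        self.fields = [None] * field_count


def reachable(roots):
    """Return the names of all objects reachable from the given roots."""
    seen = {}
    stack = [r for r in roots if r is not None]
    while stack:
        obj = stack.pop()
        if id(obj) in seen:
            continue                      # already visited (handles cycles)
        seen[id(obj)] = obj
        stack.extend(f for f in obj.fields if f is not None)
    return {o.name for o in seen.values()}


firstname = Obj("firstname", field_count=0)
person = Obj("person")
person.fields[0] = firstname   # corresponds to person.Firstname = firstname

roots = [person]               # assumption: person is the only root
before = reachable(roots)      # both objects are reachable

person.fields[0] = None        # the mutator overwrites the reference
after = reachable(roots)       # "firstname" has become garbage
```

After the second assignment nothing references the `firstname` object from the roots any more, so it is exactly the kind of object the collector is allowed to reclaim.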

The collector is responsible for finding all unreachable objects and reclaiming their memory.

Let’s have a look at the roots. They are called roots because they are accessible directly, that is, they are accessible to the mutator without going through other objects. We denote the set of all root objects as Roots.

Now let’s look at the objects allocated on the heap. We denote this set as Objects. Each object O can be distinguished by its address. For simplicity, let’s assume that object fields can only be references to other objects. In reality, most object fields are of primitive types (like bool, char, int, etc.), but these fields are not important for the connectivity of the object graph: it doesn’t matter whether an int field has the value 5 or 10. So for now let’s assume that objects have reference fields only. Let’s denote by |O| the number of reference fields of the object O, and by &O[i] the address of the i-th field of O. We write the usual pointer dereference of ptr as *ptr.

This notation allows us to define the set Pointers for an object O as

Pointers(O) ={ a | a=&O[i], where 0<=i<|O| }

For convenience we define Pointers(Roots)=Roots.
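The notation may be easier to follow with a small model. In the Python sketch below I assume a flat `memory` list in which every object starts with a header cell holding |O|, followed by its reference fields. This layout, and the names `memory`, `pointers` and `deref`, are my own assumptions for illustration only.

```python
NULL = None

# Assumed layout: [field_count, field_0, field_1, ...] for each object.
memory = [
    2, 3, NULL,   # object at address 0: |O| = 2, fields hold address 3 and null
    0,            # object at address 3: |O| = 0, no reference fields
]


def pointers(o):
    """Pointers(O): the addresses &O[i] for 0 <= i < |O|."""
    field_count = memory[o]
    return [o + 1 + i for i in range(field_count)]


def deref(ptr):
    """*ptr: the reference stored at the given field address."""
    return memory[ptr]
```

With this layout `pointers(0)` yields the two field addresses of the first object, and dereferencing the first of them gives the address of the second object.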

To recap – we have defined the following important sets:

- Objects

- Roots

- Pointers(O)

Having defined these sets, we are going to define the following operations:

- New()

- Read(O, i)

- Write(O, i, value)

The New() operation obtains a new heap object from the allocator component. It simply returns the address of the allocated object. The pseudo-code for New() is:

    New():
        return allocate()

It is important to say that the allocate() function allocates a contiguous block of memory. The reality is a bit more complex. We have different object types (e.g. Person, string, etc.), and usually New() takes parameters for the object type and in some cases for its size. It could also happen that there is not enough memory. We will revisit the definition of New() later. For simplicity, we assume that we allocate objects of one type only.

The Read(O, i) operation returns the reference stored at the i-th field of the object O. The pseudo-code for Read(O, i) is:

    Read(O, i):
        return O[i]

The Write(O, i, value) operation updates the reference stored at the i-th field of the object O. The pseudo-code for Write(O, i, value) is:

    Write(O, i, value):
        O[i] = value
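The three operations can be sketched in runnable Python as follows. This is only a toy model under my own assumptions: a fixed-size `heap` list, a single object type with `FIELDS_PER_OBJECT` reference fields, and a simple bump-pointer `allocate()` that returns `None` when the heap is exhausted. None of these names come from a real runtime.

```python
FIELDS_PER_OBJECT = 2   # assumption: one object type with two reference fields
HEAP_SIZE = 8           # assumption: the heap has room for four such objects

heap = [None] * HEAP_SIZE
next_free = 0           # bump pointer: address of the next unused cell


def allocate():
    """Hand out the next contiguous block, or None if the heap is full."""
    global next_free
    if next_free + FIELDS_PER_OBJECT > HEAP_SIZE:
        return None
    addr = next_free
    next_free += FIELDS_PER_OBJECT
    return addr


def new():
    return allocate()


def read(o, i):
    return heap[o + i]


def write(o, i, value):
    heap[o + i] = value


person = new()
name = new()
write(person, 0, name)   # field 0 of person now references name
```

Here an object is identified by the address of its first field, so `read(person, 0)` returns the address of `name`, while the untouched field 1 still holds the null reference.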

Sometimes we have to state explicitly that an operation or function is atomic. When we need to, we write atomic in front of the operation name.

Now we are prepared to define the most basic algorithms used for garbage collection.

Mark-sweep algorithm

Earlier I wrote that the definition of New() is oversimplified. Let’s revisit it:

    New():
        ref = allocate()
        if ref == null
            collect()
            ref = allocate()
            if ref == null
                error "out of memory"
        return ref

    atomic collect():
        markFromRoots()
        sweep(HeapStart, HeapEnd)

The updated New() definition is a bit more robust. It first tries to allocate memory. If there is no sufficiently large contiguous memory block, it collects the garbage (if there is any) and then tries to allocate again. The second attempt may succeed or fail. What is important about this definition is that it reveals when GC will trigger. Again, the reality is more complex, but in general GC triggers when the program tries to allocate memory.

Let’s define the missing markFromRoots and sweep functions.

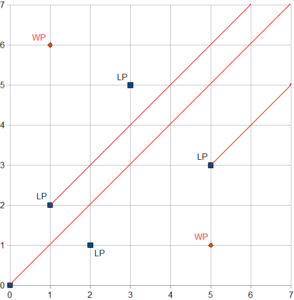

    markFromRoots():
        worklist = empty
        foreach field in Roots
            ref = *field
            if ref != null && !isMarked(ref)
                setMarked(ref)
                enqueue(worklist, ref)
        mark()

    mark():
        while !isEmpty(worklist)
            ref = dequeue(worklist)
            foreach field in Pointers(ref)
                child = *field
                if child != null && !isMarked(child)
                    setMarked(child)
                    enqueue(worklist, child)

    sweep(start, end):
        scan = start
        while (scan < end)
            if isMarked(scan)
                unsetMarked(scan)
            else free(scan)
            scan = nextObject(scan)

The algorithm is simple and straightforward. It starts from Roots and marks each reachable object. Then it iterates over the whole heap and frees the memory of every unmarked (unreachable) object, and removes the mark from the surviving objects. It is important to say that this algorithm needs two passes: a mark pass over the reachable objects and a sweep pass over the entire heap.
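For readers who prefer something they can run, here is a minimal Python sketch of the whole scheme. It is a toy under my own assumptions: the heap is a list of fixed-size slots, references are slot indices, the mark bit lives inside each object, and the class name `ToyHeap` and its attributes are hypothetical. A real collector works on raw memory and is far more involved.

```python
from collections import deque


class ToyHeap:
    """A toy mark-sweep heap; each slot holds one object or None (free)."""

    def __init__(self, size, fields_per_object=2):
        self.slots = [None] * size
        self.fields_per_object = fields_per_object
        self.roots = []                      # root references (slot indices)

    def allocate(self):
        for addr, slot in enumerate(self.slots):
            if slot is None:
                self.slots[addr] = {
                    "marked": False,
                    "fields": [None] * self.fields_per_object,
                }
                return addr
        return None                          # no free slot

    def new(self):
        ref = self.allocate()
        if ref is None:
            self.collect()                   # GC triggers on allocation failure
            ref = self.allocate()
            if ref is None:
                raise MemoryError("out of memory")
        return ref

    def read(self, o, i):
        return self.slots[o]["fields"][i]

    def write(self, o, i, value):
        self.slots[o]["fields"][i] = value

    def collect(self):
        self.mark_from_roots()
        self.sweep()

    def mark_from_roots(self):
        worklist = deque()
        for ref in self.roots:
            if ref is not None and not self.slots[ref]["marked"]:
                self.slots[ref]["marked"] = True
                worklist.append(ref)
        while worklist:                      # the mark() loop
            ref = worklist.popleft()
            for child in self.slots[ref]["fields"]:
                if child is not None and not self.slots[child]["marked"]:
                    self.slots[child]["marked"] = True
                    worklist.append(child)

    def sweep(self):
        for addr, obj in enumerate(self.slots):
            if obj is None:
                continue                     # slot is already free
            if obj["marked"]:
                obj["marked"] = False        # reset the mark for the next cycle
            else:
                self.slots[addr] = None      # free the unreachable object


heap = ToyHeap(size=3)
a = heap.new()
b = heap.new()
c = heap.new()
heap.roots.append(a)
heap.write(a, 0, b)      # a -> b is reachable, c is garbage
d = heap.new()           # heap is full: collect() runs and c's slot is reused
```

In this run the fourth allocation finds the heap full, the collector marks `a` and `b`, sweeps `c`, and the new object lands in the slot that `c` occupied.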

The algorithm does not solve the problem of heap fragmentation. This naive implementation doesn’t work well in real-world scenarios. In the next post of this series we will see how we can improve it. Stay tuned.
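A tiny Python illustration of the fragmentation problem, under the assumption that the heap is a list of cells and that a sweep has just freed every other one-cell object (the helper `largest_free_run` is a hypothetical name):

```python
def largest_free_run(heap):
    """Length of the longest run of adjacent free (None) cells."""
    best = run = 0
    for cell in heap:
        run = run + 1 if cell is None else 0
        best = max(best, run)
    return best


# Assumed heap state after a sweep: live objects A, B, C with holes in between.
heap = ["A", None, "B", None, "C", None]

free_cells = heap.count(None)        # total free memory
largest = largest_free_run(heap)     # biggest contiguous free block
can_fit_two = largest >= 2           # can a two-cell object be allocated?
```

Half of the heap is free, yet an object that needs two adjacent cells cannot be allocated, because mark-sweep never moves the surviving objects together.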